The limits of intelligence | Piece #3 Misaal

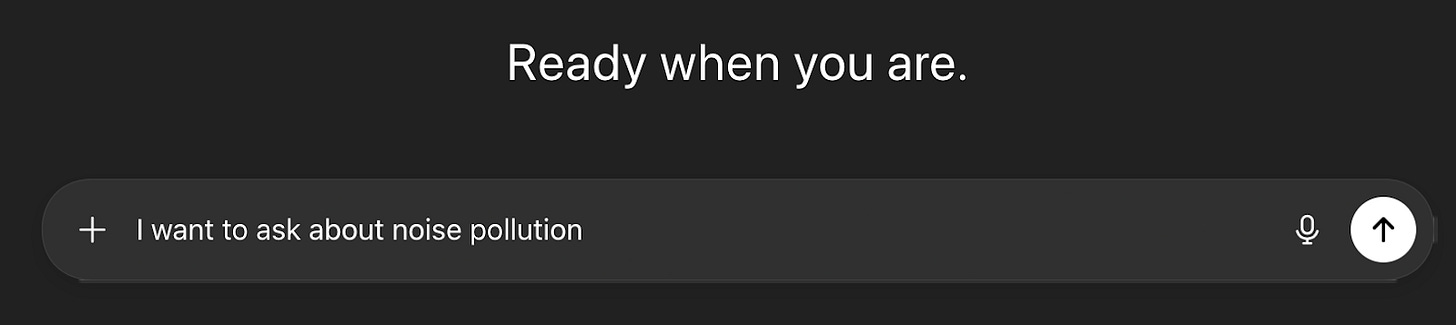

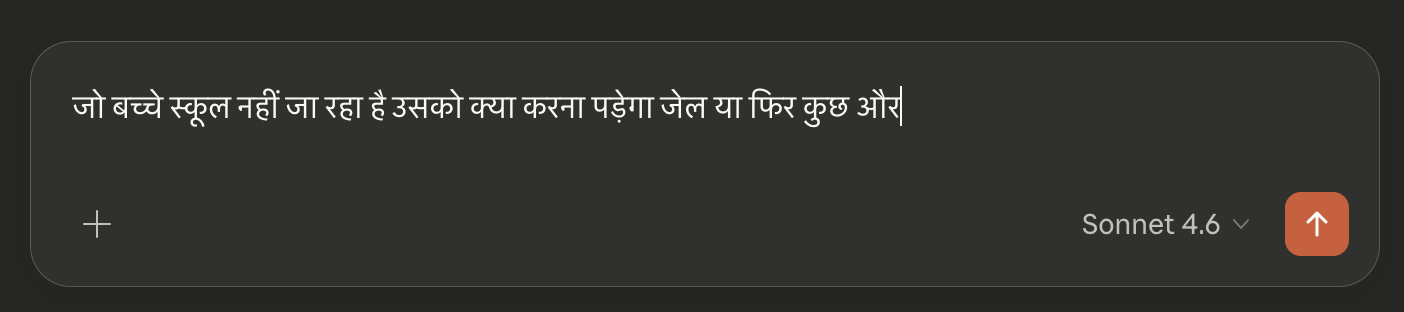

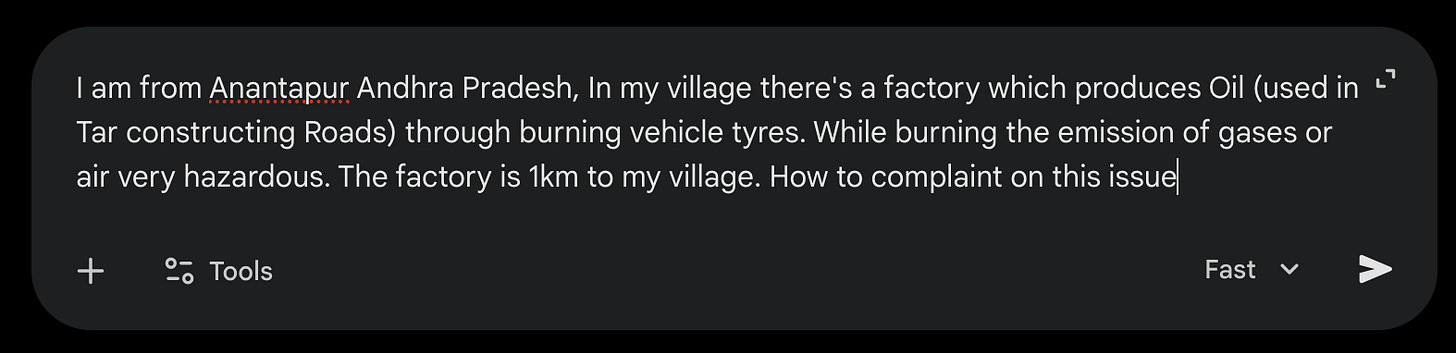

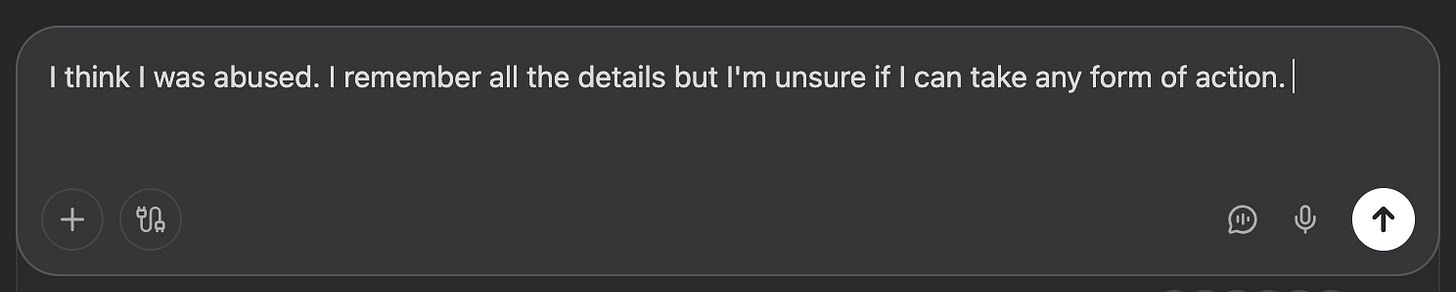

In both rural and urban areas, we see a common pattern playing out: more and more people are turning to AI for help with their very real problems. Not because AI is the best option, but often because it's the only one. Legal aid is overstretched, and lawyers are out of reach or expensive. AI, on the other hand, doesn't charge by the hour; it is available at midnight, on a phone, even with patchy internet.

AI responds quickly, politely, in complete sentences. It sounds reassuring; it sounds like it knows. But here is what tends to happen next: the answer comes back long, careful and full of legal-sounding language. It cites relevant sections and outlines general procedures. And the person reading it is left with something that is not quite wrong, but not quite right either, and not at all useful for their specific situation, in their state, with their set of facts.

So, if AI is so extraordinary at processing and explaining vast amounts of legal data, why does it so often fail people in real-world situations? Why is there such an enormous gap between what it gives you and what you actually need?

One of the underlying reasons is the way AI models are built and tested today. Large language models (LLMs) such as OpenAI's GPT-5, Anthropic's Opus 4.6 and Google's Gemini 2.0 are typically evaluated against carefully constructed questions that are complete and richly detailed. That is very unlike how most of us actually ask for help.

AI responses are then measured almost entirely on factual accuracy. An answer may be technically correct yet completely disconnected from the person asking: their background, their language, and the social and cultural framework they live within.

In law and justice, that gap is not a minor flaw; it can be genuinely harmful.

Misaal was born

To fix this problem, we started Misaal: a collective effort to surface what a truly high-quality AI response to a question about law and justice in India looks like.

We are building a community-led evaluation benchmark to measure how well AI performs on law and justice questions as they are asked by ordinary citizens of India. Without a benchmark, claims that AI systems perform well on law and justice questions cannot be verified; with one, that performance can be measured.

We are co-creating this benchmark with civil society organizations, frontline justice workers and a large community that is reimagining law and justice in India, drawing on their lived experience and accumulated wisdom.

“When we will all see our role in society as servants, we will all light up the sky together like countless stars on a dark night.” - Vinoba Bhave

The benchmark is open-source and universally designed, meaning anyone can adapt it for community-led evaluations in other geographies and domains.

Measuring what matters

Misaal redefines “good performance” for AI through a citizen's lens. It shifts the authority to evaluate AI away from an exclusive group of legal experts and academics, and toward the communities most affected by the justice gap.

What makes an AI answer actually useful? When we had early conversations with frontline paralegals and civil society organizations, the most common answer was not “accuracy.” It was: does it tell me what to do next? People also cared about empathy, accessibility, plain language, and cultural relevance. In this phase, we’re looking to speak with many more people across India, in many languages, to surface what matters most to them in an answer given by AI. We call these our evaluation principles.

At the same time, we’re sourcing 10,000 questions on law and justice from the organizations closest to the problem: legal aid clinics, paralegal networks, helplines, and civil society groups who’ve spent years sitting across the table from people who need the law and can’t reach it. These are real questions, about real problems, in the words people reach for. We’re gathering both text and voice queries. Our key commitment is to truly represent a nation of many nations, and the full diversity of India’s community voices.

Once we have those questions, we feed them into AI models exactly as they are. That means we submit each query to multiple LLMs as it was asked, in the language it was asked in, without rewording it or making it more precise, and collect what each model says. The messiness is intentional. We want to see how AI performs on human questions, not on textbook ones.

The responses will then be reviewed by our community council, made up of civil society members and legal practitioners, and scored against the evaluation principles identified in the first step. Is this answer legally correct? Is it clear? Is it honest about its own limits? Does it acknowledge the person’s specific situation? Does it point toward a real next step?

Ultimately, we want to build a platform where people can see which LLM performs best in their specific context.

These questions are not edge cases to be accounted for later. They are the questions of everyday life, asked by ordinary citizens. Shaping technology systems like AI so that they meet us where we are and respond honestly to our questions is work that belongs to all of us.

In Punjabi and Urdu, misaal means role model, exemplar or shining light.

M.I.S.A.A.L., the Multi-dimensional Intelligence Standard for Assessment of Socio-Legal AI Interactions, is an effort to hear from thousands of users in diverse communities and surface the elements that truly make a quality AI response to a query about law or justice in India.